A recent, significant data breach at GenNomis, an AI image-generation platform operated by the South Korean firm AI-NOMIS, has raised pressing concerns about the risks of unregulated AI-generated content.

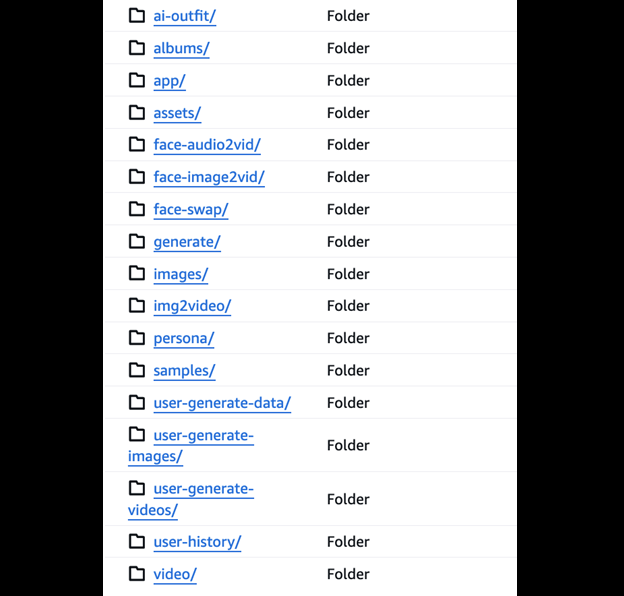

GenNomis enables users to create diverse images from text prompts, generate AI personas, and engage in face-swapping techniques. It boasts an extensive range of over 45 artistic styles along with a marketplace dedicated to the buying and selling of user-created images.

What Data Was Exposed?

According to a report from vpnMentor, cybersecurity expert Jeremiah Fowler uncovered a publicly accessible and poorly configured database containing approximately 47.8 gigabytes of data, comprising 93,485 images and related JSON files.

The data revealed a repository of explicit AI-generated material, including face-swapped images and images that appeared to depict underage individuals. An initial analysis indicated that adult content was prevalent, raising alarming concerns about the risk of child exploitation.

The incident aligns with ongoing warnings from a UK internet safety organization, which reported that perpetrators on the dark web are increasingly leveraging open-source AI tools to create child sexual abuse material (CSAM).

Fowler reported encountering numerous images that appeared to show minors in explicit contexts, as well as images of celebrities depicted as children. The database also included JSON files logging command prompts and links to the generated images, offering insight into how the platform operates.

Aftermath and Dangers

Fowler noted a startling lack of fundamental security measures, such as password protection or data encryption, within the database. However, he emphasized that his findings do not imply any intentional wrongdoing by GenNomis or AI-NOMIS.

After discovering the exposed database, Fowler sent a responsible disclosure notice to the company. The database was subsequently removed, following the temporary shutdown of the GenNomis and AI-NOMIS websites. Notably, a directory labeled “Face Swap” had already been deleted before the disclosure was sent.

This incident underscores the growing problem of “nudify” and Deepfake pornography, in which artificial intelligence is used to produce realistic explicit imagery of people without their consent. Fowler highlights that approximately 96% of Deepfakes circulating online are pornographic, and that 99% of those depict women who did not consent.

The exposure of such sensitive data poses substantial risks, including extortion, reputational damage, and revenge against affected individuals. Additionally, the content found in the database contravenes GenNomis’s explicit policy against generating explicit content involving minors.

Fowler characterized the exposure as a clear warning about the risks in the AI image-generation sector and stressed the urgent need for greater responsibility among developers. He advocates proactive detection systems that identify and block the creation of explicit Deepfakes, particularly those involving minors. He also calls for robust identity verification and watermarking to deter misuse and promote accountability.

As of the latest updates, the GenNomis website remains offline.